Artificial intelligence has split cybersecurity into two arms races running at the same time. Defenders using AI now detect breaches 108 days faster than those without and cut average breach cost by 43%. Attackers using the same technology have driven phishing volumes up 1,265%, and 82.6% of phishing emails now use AI in some form. Both are true at once, which is what makes the picture hard to read. The AI cybersecurity market hit $29.64 billion in 2025 and is projected to reach $167.77 billion by 2035. This article covers what AI actually does on both sides: the detection mechanics, the attack vectors, the cost figures, and which tools enterprises are running in 2026.

- AI-powered security detects breaches 108 days faster and cuts average breach cost from $4.44M to $2.54M.

- 82.6% of phishing emails now use AI; AI-generated phishing hits a 78% open rate and 21% click-through.

- Deepfake incidents surged 680% year-over-year; voice cloning now requires as little as 3 seconds of audio.

- IBM X-Force 2026: vulnerability exploitation caused 40% of all incidents, with AI accelerating attacker speed.

- The AI cybersecurity market grows at 18.93% CAGR, projected to reach $167.77B by 2035.

How AI strengthens cyber defense: detection, response, and cost savings

AI’s contribution on the defensive side is speed and scale. Human analysts can’t process the volume of telemetry modern networks generate — millions of events per hour across endpoints, cloud workloads, and user sessions. ML models can. The breach economics that flow from that gap are now quantified well enough to take to a board.

Real-time threat detection and automated response

Traditional signature-based detection matches known malware patterns. That fails against zero-days and polymorphic malware that changes its signature on each iteration. AI-based detection flips the model: it learns what normal looks like and flags deviations. An AI system monitoring network traffic can catch lateral movement — an attacker working from a compromised endpoint toward high-value servers — within minutes. Without AI, that same movement can go undetected for days.

Research from 2025 puts AI-assisted detection 108 days ahead of traditional methods. IBM estimates roughly $18,000 in additional damage per undetected day. Do the math on 108 days: 108 × $18,000 is approximately $1.9 million in avoided losses per breach event, before you count response and remediation costs.
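The arithmetic behind that estimate, as a small sketch. It assumes damage accrues linearly per undetected day, which is a simplification; the function and constant names are illustrative only.

```python
# Figures quoted in the text above.
DAYS_FASTER = 108            # detection lead attributed to AI-assisted tooling
COST_PER_DAY_USD = 18_000    # estimated added damage per undetected day


def avoided_loss(days_faster: int, cost_per_day: float) -> float:
    """Linear estimate: each day of earlier detection avoids one day of damage."""
    return days_faster * cost_per_day


estimate = avoided_loss(DAYS_FASTER, COST_PER_DAY_USD)
print(f"${estimate:,.0f}")  # $1,944,000
```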

The tools running this in enterprise environments: CrowdStrike Falcon uses an AI-driven threat graph for endpoint detection and response; Darktrace applies unsupervised machine learning to network anomaly detection; Microsoft Defender for Endpoint integrates behavioral AI across Microsoft 365. Different ML architectures, same core approach: model normal, flag abnormal, automate initial containment.
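To make "model normal, flag abnormal" concrete, here is a deliberately simple sketch of a lateral-movement heuristic. It is not how any of the products above work internally; it assumes flow records reduced to `(source, destination)` pairs, and the hostnames and the `max_new` threshold are hypothetical.

```python
from collections import defaultdict


def build_baseline(flows):
    """Map each source host to the set of destinations seen during training."""
    baseline = defaultdict(set)
    for src, dst in flows:
        baseline[src].add(dst)
    return baseline


def new_destinations(baseline, window_flows):
    """Collect, per source, destinations in the window the baseline never saw."""
    novel = defaultdict(set)
    for src, dst in window_flows:
        if dst not in baseline.get(src, set()):
            novel[src].add(dst)
    return novel


def flag_hosts(baseline, window_flows, max_new=3):
    """Flag sources that contacted more than max_new never-before-seen hosts."""
    novel = new_destinations(baseline, window_flows)
    return sorted(src for src, dsts in novel.items() if len(dsts) > max_new)


# Hypothetical data: ws-17 suddenly fans out to servers it has never touched.
history = [("ws-17", "fileserver"), ("ws-17", "mail"), ("ws-22", "mail")]
window = [
    ("ws-17", "mail"), ("ws-17", "db-1"), ("ws-17", "db-2"),
    ("ws-17", "dc-1"), ("ws-17", "backup"), ("ws-22", "fileserver"),
]
print(flag_hosts(build_baseline(history), window))  # ['ws-17']
```

A production system would learn the threshold per host and weigh many more signals; the point here is only the baseline-then-deviation shape.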

Behavioral analytics: catching insider threats before they escalate

External attackers get the headlines, but insider threats (malicious employees or compromised credentials operating inside trusted perimeters) cause outsized damage precisely because perimeter defenses don’t apply. User and Entity Behavior Analytics (UEBA) is the AI discipline built for this.

UEBA systems establish behavioral baselines for every user and entity on a network, including servers, applications, and service accounts. A financial analyst who normally downloads 500 KB a day but suddenly pulls 50 GB at 2 AM triggers an anomaly score, even if their credentials are valid and no malware signature is present. Organizations using AI-led incident response report a 70% reduction in response time versus manual workflows. The main platforms in this space are Splunk Enterprise Security, Vectra AI, and IBM QRadar. CISA has published specific AI use cases for insider threat programs in federal environments, which are a useful reference for high-security implementations.
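The analyst example can be sketched as a z-score against a per-user baseline. This is an illustrative simplification, not the scoring model of any named platform; the history values and the threshold of 3 standard deviations are assumptions.

```python
from statistics import mean, stdev


def anomaly_score(history_bytes, observed_bytes):
    """Standard deviations between today's volume and the user's own baseline."""
    mu = mean(history_bytes)
    sigma = stdev(history_bytes)
    if sigma == 0:
        # Perfectly flat history: any change at all is maximally unusual.
        return 0.0 if observed_bytes == mu else float("inf")
    return (observed_bytes - mu) / sigma


# Hypothetical week of daily download volumes, all close to 500 KB.
history = [480_000, 510_000, 495_000, 520_000, 505_000, 490_000, 500_000]
tonight = 50_000_000_000  # the 50 GB pull from the example above

THRESHOLD = 3.0  # assumed cut-off; real deployments tune this per population
print(anomaly_score(history, tonight) > THRESHOLD)   # True
print(anomaly_score(history, 500_000) > THRESHOLD)   # False
```

Real UEBA systems typically combine many such features (time of day, peer group, destination) rather than relying on one volume metric.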

The financial case for AI security: $1.9M savings per organization

The ROI case has become hard to argue with. IBM’s Cost of a Data Breach report shows organizations that deploy AI and automation extensively in security incur an average breach cost of $2.54 million, versus $4.44 million for those without. That is 43% lower, an average saving of $1.9 million compared with non-users. In addition, 95% of security professionals say AI tools improve prevention, detection, response, and recovery.

Precedence Research has the global AI cybersecurity market growing at 18.93% CAGR, from $29.64 billion in 2025 to a projected $167.77 billion by 2035. Fortune Business Insights puts the CAGR higher, at 21.71% from a $34.09 billion 2025 base. The methodologies differ, but the major research firms point in the same direction.
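The quoted growth rate can be checked from the two endpoints. A minimal sketch, assuming the standard compound annual growth rate formula and a 10-year span (2025 to 2035); `cagr` is an illustrative helper name.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    return (end_value / start_value) ** (1 / years) - 1


# Market size in billions of USD, as quoted above.
rate = cagr(29.64, 167.77, 10)
print(f"{rate:.2%}")  # 18.93%
```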

AI-powered cyber attacks: phishing surges, deepfakes, and the IBM X-Force 2026 findings

The capabilities useful for defense — language generation, voice synthesis, pattern recognition, autonomous task execution — are equally available to attackers, with no compliance overhead, no budget cycles, and no organizational friction. The 2025–2026 threat intelligence data shows what that asymmetry looks like in practice.

Generative AI and the 1,265% phishing surge

Phishing has always been the highest-ROI attack vector because it targets the human layer rather than technical controls. Generative AI made it significantly worse. 82.6% of phishing emails now use AI in some form, and volumes surged 1,265% after generative tools became widely accessible.

LLMs eliminate the grammatical errors and cultural awkwardness that used to help recipients spot phishing attempts. They also enable personalization at scale, scraping LinkedIn profiles, earnings call transcripts, and social media to craft emails referencing real projects and real colleagues. AI-generated phishing now achieves a 78% open rate and 21% click-through rate, compared to roughly 20% opens and 2–3% clicks for legitimate marketing email. That’s not marginal improvement. It’s a different category of threat.

The Deloitte Center for Financial Services projects generative AI fraud in the US will reach $40 billion by 2027. IBM’s 2026 X-Force data attributes 37% of AI-assisted attacks to phishing — still the primary weaponization vector despite all the deepfake coverage.

Deepfake voice fraud: 680% growth and the 3-second cloning threshold

Modern voice cloning can replicate an executive’s voice from as little as 3 seconds of audio, readily sourced from earnings calls, conference presentations, or YouTube interviews. Attackers don’t need prolonged access. They need a public recording.

Deepfake incidents increased 680% year-over-year, with Q1 2025 alone recording 179 separate incidents. 53% of financial professionals reported attempted deepfake scams as of 2024. The detection problem is real: humans correctly identify AI-generated voices only 60% of the time, so roughly four in ten cloned voices pass as genuine even when targets are actively trying to verify.

One documented case: Harvard Extension School cybersecurity experts described a company losing over $25 million in under 30 minutes, with the attacker impersonating a CFO via deepfake video during a multi-person call. The FBI’s 2025 IC3 report logged a 37% rise in AI-assisted business email compromise. CISA now classifies voice and video deepfakes as a critical infrastructure threat requiring dedicated detection protocols.

IBM X-Force 2026: AI accelerates exploitation at scale

The IBM X-Force 2026 Threat Intelligence Index documents how AI is changing the attack landscape at the infrastructure level:

- Vulnerability exploitation became the leading breach cause, accounting for 40% of all X-Force incidents in 2025. AI tools speed up attacker reconnaissance and exploit identification.

- Attacks against public-facing applications jumped 44%, driven by AI-enabled discovery of misconfigured authentication controls.

- Active ransomware and extortion groups increased 49% year-over-year — more fragmentation, not consolidation.

- Supply chain and third-party compromises have nearly quadrupled since 2020. 70% of attacks now enter via vendors, not direct external breaches.

- Infostealer malware exposed 300,000+ ChatGPT credentials in 2025, putting AI platforms at the same credential risk as core enterprise SaaS, with the added risk of prompt injection and output manipulation.

16% of breaches involved direct attacker use of AI tools. Those breaches averaged $5.72 million in cost, versus the $4.44M average cited above, reflecting how much faster AI-assisted intrusions move and how much harder they are to contain.

AI cybersecurity market 2026: size, leading platforms, and what comes next

Security spending on AI is growing across every segment. Mordor Intelligence puts the 2025 market at $30.92 billion with a 22.8% CAGR through 2030; Precedence Research models $167.77 billion by 2035. The generative AI security subset is growing faster — near tenfold expansion expected between 2024 and 2034.

Leading AI cybersecurity platforms by category in 2026

The tool market has organized around four functional categories. For endpoint detection and response: CrowdStrike Falcon, SentinelOne, Sophos Intercept X, and Microsoft Defender for Endpoint all run on-device AI to detect and contain threats before lateral spread. SIEM and SOAR platforms (Splunk Enterprise Security, IBM QRadar, Palo Alto Cortex XSOAR) aggregate telemetry and use ML to correlate alerts into actionable incidents. Network detection and response tools — Darktrace, Vectra AI, ExtraHop Reveal(x) — monitor east-west traffic for lateral movement and command-and-control beaconing. Next-generation firewalls from Palo Alto, Fortinet, Check Point, and Cisco integrate ML-based inspection with global threat intelligence feeds.

Most enterprises with hybrid environments layer NDR for network visibility with EDR for endpoint coverage, feeding both into a SIEM. The specific platform choice depends on existing stack and primary detection surface.
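Command-and-control beaconing, mentioned above as an NDR target, tends to show up as connections at suspiciously regular intervals. The sketch below uses one common heuristic, the coefficient of variation of the gaps between connections; it is not any vendor's detection logic, and `max_cv` and `min_events` are assumed thresholds.

```python
from statistics import mean, pstdev


def interval_cv(timestamps):
    """Coefficient of variation of gaps between consecutive connections."""
    ordered = sorted(timestamps)
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    if len(gaps) < 2:
        return None
    average_gap = mean(gaps)
    if average_gap == 0:
        return None
    return pstdev(gaps) / average_gap


def looks_like_beacon(timestamps, max_cv=0.1, min_events=6):
    """Regular, repeated check-ins produce a low coefficient of variation."""
    if len(timestamps) < min_events:
        return False
    cv = interval_cv(timestamps)
    return cv is not None and cv < max_cv


# Hypothetical connection times in seconds to a single external host.
implant = [0, 60, 121, 180, 241, 300, 360]   # about one check-in a minute
browsing = [0, 5, 7, 90, 95, 400, 410]       # bursty, human-driven traffic

print(looks_like_beacon(implant))   # True
print(looks_like_beacon(browsing))  # False
```

Malware that adds random jitter to its check-ins will evade a fixed threshold like this, which is part of why production tools lean on learned models rather than a single statistic.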

Emerging trends: autonomous defense, federated learning, and quantum-resistant cryptography

Autonomous response is the near-term frontier. Rather than flagging a suspicious process for human review, autonomous systems isolate endpoints, revoke credentials, and block IPs without waiting for analyst approval. IBM X-Force projects that as multimodal AI matures, adversaries will automate complex reconnaissance and ransomware chains. When that happens, human-speed response won’t be sufficient. Attack automation of this kind is already visible at the low end of the sophistication spectrum, which is reason enough to plan for it now.
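The difference between flagging and acting can be sketched as a small decision policy. Everything here is hypothetical: the `Detection` fields, the score scale, the thresholds, and the action strings stand in for whatever a real EDR or SOAR integration would expose.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    host: str
    user: str
    remote_ip: str
    score: float  # assumed scale: 0.0 (benign) to 1.0 (certain compromise)


def plan_response(detection, auto_threshold=0.9, review_threshold=0.6):
    """Return containment actions; only high-confidence hits skip the analyst."""
    if detection.score >= auto_threshold:
        return [
            f"isolate_endpoint:{detection.host}",
            f"revoke_credentials:{detection.user}",
            f"block_ip:{detection.remote_ip}",
        ]
    if detection.score >= review_threshold:
        return [f"open_ticket:{detection.host}"]
    return []


# 203.0.113.0/24 is a documentation-only address range.
hit = Detection("ws-17", "jdoe", "203.0.113.9", score=0.95)
for action in plan_response(hit):
    print(action)
```

Keeping a middle tier that routes to a human is a design choice: it limits the blast radius of false positives while still letting the highest-confidence detections be contained at machine speed.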

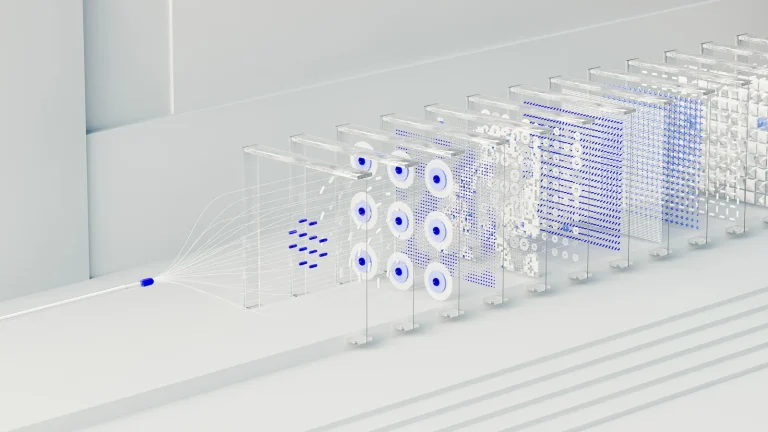

Federated learning addresses a specific problem in threat intelligence: organizations want to share threat data to improve collective detection, but can’t expose raw logs to third parties. Federated learning lets them share gradient updates instead of data, which is particularly useful in healthcare and finance where data sovereignty is non-negotiable.
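The core aggregation step can be sketched in a few lines. This is a minimal version of weighted federated averaging in plain Python, assuming each participant reports only a sample count and a gradient vector; the function names, learning rate, and numbers are illustrative.

```python
def federated_average(updates):
    """Average gradients weighted by how many samples each participant used.

    updates: list of (num_samples, gradient_vector) pairs. No raw logs appear.
    """
    total = sum(n for n, _ in updates)
    if total == 0:
        raise ValueError("no samples reported")
    dim = len(updates[0][1])
    averaged = [0.0] * dim
    for n, gradient in updates:
        if len(gradient) != dim:
            raise ValueError("gradient length mismatch")
        for i, g in enumerate(gradient):
            averaged[i] += g * n / total
    return averaged


def apply_update(weights, averaged_gradient, learning_rate=0.1):
    """One gradient-descent step on the shared model."""
    return [w - learning_rate * g for w, g in zip(weights, averaged_gradient)]


# Two hypothetical organizations train locally and share only gradients.
updates = [(100, [1.0, -2.0]), (300, [3.0, 2.0])]
shared_gradient = federated_average(updates)
print(shared_gradient)  # [2.5, 1.0]
new_weights = apply_update([0.0, 0.0], shared_gradient)
```

Gradients can still leak information about the underlying data, so real deployments usually add protections such as secure aggregation or differential privacy on top of this step.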

Further out, AI is being used for quantum-resistant cryptography migration. NIST finalized its first post-quantum cryptographic standards in 2024; AI tools can now audit cryptographic implementations at scale and prioritize migration roadmaps. The NIST AI Risk Management Framework and the EU AI Act give security teams governance structures for deploying AI tools in ways that are auditable and compliant.
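The prioritization half of that work can be sketched as a rule-based pass over a cryptographic inventory. The algorithm sets reflect public-key schemes known to be vulnerable to quantum attack and the three algorithms in NIST's 2024 standards (ML-KEM, ML-DSA, SLH-DSA); the inventory format, the 1-to-5 exposure score, and the system names are hypothetical.

```python
# Public-key algorithms breakable by a large quantum computer (Shor's algorithm).
QUANTUM_VULNERABLE = {"RSA", "DSA", "ECDSA", "ECDH", "DH"}
# Algorithms standardized by NIST in 2024 (FIPS 203, 204, 205).
POST_QUANTUM = {"ML-KEM", "ML-DSA", "SLH-DSA"}


def classify(algorithm):
    """Bucket an algorithm name as 'migrate', 'ok', or 'review'."""
    name = algorithm.upper()
    if name in QUANTUM_VULNERABLE:
        return "migrate"
    if name in POST_QUANTUM:
        return "ok"
    return "review"  # symmetric ciphers, hashes, or anything unrecognized


def migration_queue(inventory):
    """Order systems needing migration by exposure, highest first.

    inventory: list of (system, algorithm, exposure) with exposure from 1 to 5.
    """
    flagged = [row for row in inventory if classify(row[1]) == "migrate"]
    return sorted(flagged, key=lambda row: row[2], reverse=True)


inventory = [
    ("vpn-gateway", "RSA", 5),
    ("internal-wiki", "ECDSA", 2),
    ("key-exchange-pilot", "ML-KEM", 4),
    ("backup-archive", "AES-256", 3),
]
for system, algorithm, exposure in migration_queue(inventory):
    print(system, algorithm, exposure)
```

The hard part in practice is building the inventory itself from code, certificates, and network captures, which is where the AI-assisted auditing described above would come in.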

Here are the numbers worth sitting with: AI-powered security detects breaches 108 days faster and cuts costs 43%, yet 87% of organizations were still hit by AI-driven attacks in the past year. Deployment pace hasn’t matched threat escalation pace. The concrete step: measure your current mean time to detect (MTTD) and mean time to respond (MTTR), compare your expected breach cost against IBM’s AI-protected average of $2.54M, and use that gap to make the budget case.
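Measuring the baseline is straightforward if incident records carry three timestamps. A minimal sketch, assuming MTTD is measured from occurrence to detection and MTTR from detection to resolution (definitions vary between teams); the record keys and the sample incidents are hypothetical.

```python
from datetime import datetime
from statistics import mean


def mean_hours(incidents, start_key, end_key):
    """Average elapsed hours between two timestamps across incidents."""
    return mean(
        (incident[end_key] - incident[start_key]).total_seconds() / 3600
        for incident in incidents
    )


incidents = [
    {
        "occurred": datetime(2025, 1, 1, 0, 0),
        "detected": datetime(2025, 1, 3, 0, 0),
        "resolved": datetime(2025, 1, 4, 0, 0),
    },
    {
        "occurred": datetime(2025, 2, 10, 8, 0),
        "detected": datetime(2025, 2, 10, 20, 0),
        "resolved": datetime(2025, 2, 11, 8, 0),
    },
]

mttd = mean_hours(incidents, "occurred", "detected")
mttr = mean_hours(incidents, "detected", "resolved")
print(f"MTTD: {mttd:.1f} h, MTTR: {mttr:.1f} h")  # MTTD: 30.0 h, MTTR: 18.0 h
```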

Frequently asked questions

What is artificial intelligence in cybersecurity?

AI in cybersecurity applies machine learning, behavioral analytics, and automation to detect, prevent, and respond to cyber threats faster and at greater scale than human analysts alone.

How does AI improve threat detection?

AI models learn normal behavior baselines for users and systems, then flag deviations in real time — detecting threats 108 days faster than traditional signature-based methods, per 2025 IBM research.

What are the risks of AI in cybersecurity?

AI enables attackers to automate phishing at scale (82.6% of phishing emails now use AI), clone voices from 3 seconds of audio, and accelerate vulnerability exploitation, as documented in the IBM X-Force 2026 Threat Intelligence Index.

Can AI replace cybersecurity professionals?

No. AI handles routine monitoring and initial triage, but complex incident investigation, threat hunting, and governance decisions require human expertise. As David Cass of TD Securities put it, “AI is solving lower-level problems, but it is not a replacement.”

How big is the AI cybersecurity market?

The global AI cybersecurity market was $29.64 billion in 2025 and is projected to reach $167.77 billion by 2035, growing at an 18.93% CAGR, according to Precedence Research.