Large enterprises now ingest more than 10 terabytes of log data every day. Endpoints, cloud services, SaaS tools, and operational technology networks all generate records that a security team is supposed to analyze for threats. Nobody reads 10 terabytes manually. Big data security intelligence is the practice of applying machine learning, streaming analytics, and AI-assisted investigation to that data volume to detect threats faster than any human review process could. The market built around this problem spans security information and event management (SIEM), security analytics, and user and entity behavior analytics (UEBA). Taken together, the SIEM estimate for 2026 and the security analytics estimate for 2025 cited below exceed $18 billion, and the segment is growing faster than the broader cybersecurity market. This piece covers how the technical approach works and what the leading platforms are doing with it.

- Large enterprises ingest 10+ TB of security log data daily; Microsoft Sentinel events surged 150% year-over-year in 2025

- SIEM market: $12.06B in 2026, growing to $20.78B by 2031 at an 11.5% CAGR

- Security analytics market: $6.72B in 2025, projected to reach $15.73B by 2034 at a 9.91% CAGR

- Databricks entered the SIEM market in 2026 with Lakewatch, an open agentic SIEM built on the Databricks Data Intelligence Platform

- UEBA uses ML to continuously refine behavioral baselines and flag deviations — unusual login times, abnormal data transfers, unexpected access patterns

How Big Data Powers Security Intelligence

The Scale Problem: 10 TB/Day and Growing

The volume problem in security analytics is structural. Every device, cloud service, network appliance, and SaaS application generates event logs. In an enterprise with 10,000+ employees, security teams are now ingesting over 10 terabytes of log data daily. Microsoft reported that events processed by its Sentinel SIEM surged 150% year-over-year during 2025, not because attacks increased 150%, but because the number of connected systems and services generating logs expanded that fast. The security analytics challenge is not a shortage of data. It’s figuring out which events in those 10 terabytes indicate actual adversary activity and which are normal noise.

Traditional SIEM platforms were built to aggregate and search this data, not to reason about it. The shift to big data security intelligence is the shift from keyword-matching against known indicators of compromise (IOCs) to machine learning models that build baselines of normal behavior and detect deviations. The difference matters because 82% of enterprise intrusions in 2026 involved no malware — attackers used valid credentials and legitimate tools (CrowdStrike 2026 data). Signature-based detection misses these entirely. Behavioral analytics, built on big data infrastructure, is the primary mechanism for catching them.
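To make the limitation concrete, here is a minimal sketch of indicator matching. The hash strings, field names, and events are hypothetical placeholders, not real indicators or any vendor's schema. It shows why an intrusion that uses valid credentials and a legitimate tool passes straight through a signature check.

```python
# Placeholder indicator values for illustration only, not real hashes.
KNOWN_BAD_HASHES = {"hash-of-known-malware-a", "hash-of-known-malware-b"}


def matches_ioc(event):
    """Return True if the event's file hash is a known indicator of compromise."""
    return event.get("file_hash") in KNOWN_BAD_HASHES


# A commodity malware execution: the hash is on the known-bad list.
malware_event = {
    "user": "carol",
    "process": "invoice.exe",
    "file_hash": "hash-of-known-malware-a",
}

# A malware-free intrusion: valid credentials and a legitimate system tool.
# Nothing in this event appears on any indicator list.
credential_event = {
    "user": "dave",
    "process": "powershell.exe",
    "file_hash": "hash-of-signed-system-binary",
    "login_hour": 3,
    "source_country": "unfamiliar",
}

malware_flagged = matches_ioc(malware_event)
credential_flagged = matches_ioc(credential_event)
```

The second event carries the context that matters (an unusual hour, an unfamiliar location), but a lookup against known indicators never examines those fields.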

Machine Learning and UEBA in Security Analytics

User and Entity Behavior Analytics (UEBA) is the ML-driven core of modern big data security intelligence. UEBA models continuously build statistical baselines for every user, device, and service in an environment: when they typically log in, which systems they access, what volume of data they transfer, what times they are active. When activity deviates from baseline — a user logging in at 3am from an unfamiliar location, a service account suddenly accessing a large number of files, a device communicating with an external IP it has never contacted — the model generates an anomaly score rather than a binary alert.
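As a rough illustration of baseline-and-deviation scoring, the sketch below uses a plain z-score over one entity's history. This is an assumption chosen for brevity: production UEBA models are considerably more sophisticated, and the function name and sample volumes here are hypothetical.

```python
from statistics import mean, stdev


def anomaly_score(history, observed):
    """Return how many standard deviations `observed` sits from the baseline."""
    if len(history) < 2:
        return 0.0  # not enough history to form a baseline
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # A perfectly flat baseline: any change at all is maximally unusual.
        return 0.0 if observed == mu else float("inf")
    return abs(observed - mu) / sigma


# Hypothetical daily data-transfer volumes (MB) for one service account.
baseline_mb = [100, 110, 90, 105, 95, 100, 100]

normal_day = anomaly_score(baseline_mb, 104)  # close to the usual volume
spike_day = anomaly_score(baseline_mb, 900)   # sudden large transfer
```

The output is a continuous score rather than a yes/no verdict, so the same function can rank a mildly unusual day below a large spike instead of alerting on both.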

The practical advantage of anomaly scoring over binary alerting is prioritization. Traditional SIEM rules generate thousands of alerts per day, more than analysts can review. UEBA-based systems surface the highest-risk behavioral deviations and present them with context: what changed, how far the deviation is from baseline, what other correlated signals exist. This model is now standard in enterprise-grade platforms including Microsoft Sentinel, Splunk Enterprise Security, IBM QRadar, Exabeam, and Securonix. As AI has taken on a larger role in cybersecurity, UEBA has become the default architecture for high-volume enterprise threat detection rather than a specialty add-on.
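One way to picture the triage step is as a ranking problem: score every deviation, then hand analysts only the top few. The sketch below assumes a hypothetical `Deviation` record and made-up scores; it is not how any named platform implements its queue.

```python
import heapq
from dataclasses import dataclass


@dataclass
class Deviation:
    entity: str   # user, device, or service the deviation belongs to
    signal: str   # which behavior changed
    score: float  # distance from baseline, e.g. in standard deviations


def top_deviations(deviations, limit):
    """Return the `limit` highest-scoring deviations, highest first."""
    return heapq.nlargest(limit, deviations, key=lambda d: d.score)


# Hypothetical scored deviations from one day of activity.
queue = [
    Deviation("svc-backup", "files_read", 41.0),
    Deviation("alice", "login_hour", 1.2),
    Deviation("laptop-17", "new_external_ip", 8.5),
    Deviation("bob", "bytes_uploaded", 0.4),
]

shortlist = top_deviations(queue, 2)
```

Each shortlisted record keeps the entity and signal alongside the score, which corresponds to the "what changed, and by how much" context described above.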

SIEM Market Evolution: From Log Aggregation to AI-Native Platforms

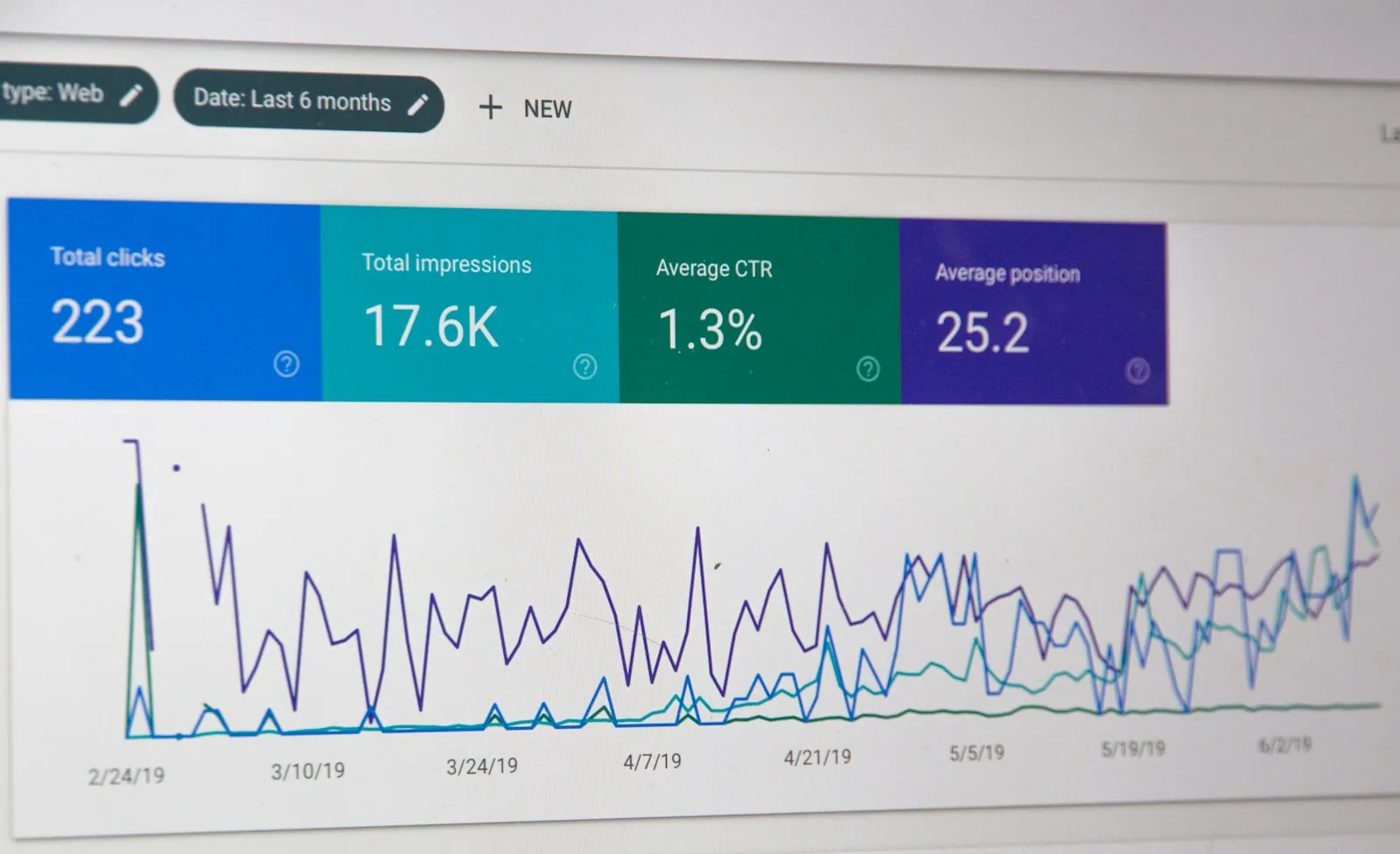

The SIEM market was valued at $12.06 billion in 2026 and is projected to reach $20.78 billion by 2031 at an 11.5% CAGR, according to Mordor Intelligence research. The security analytics market, which is broader than SIEM and includes security data lakes and AI-driven investigation platforms, was valued at $6.72 billion in 2025 and is projected to reach $15.73 billion by 2034 at a 9.91% CAGR. Across all categories, cybersecurity spending is projected to surpass $520 billion annually by 2026, with security analytics and big data infrastructure capturing a growing share as detection requires more compute and storage than traditional on-premises SIEM can provide.
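The projections can be sanity-checked with the standard compound-growth formula, value = start × (1 + rate)^years. The short sketch below applies it to the SIEM figures and to the security analytics figures, using the 9.91% rate from the summary list at the top of this piece. The function name is just an illustrative choice.

```python
def projected_value(start, cagr, years):
    """Compound `start` at annual rate `cagr` for `years` years."""
    return start * (1 + cagr) ** years


# SIEM: $12.06B in 2026, 11.5% CAGR, five years to 2031.
siem_2031 = projected_value(12.06, 0.115, 5)

# Security analytics: $6.72B in 2025, 9.91% CAGR, nine years to 2034.
analytics_2034 = projected_value(6.72, 0.0991, 9)

print(f"SIEM 2031: ${siem_2031:.2f}B")
print(f"Security analytics 2034: ${analytics_2034:.2f}B")
```

Both results land within a cent of the cited $20.78 billion and $15.73 billion, so the start values, growth rates, and end values are internally consistent.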

The platform evolution has moved through three generations. First-generation SIEM: log aggregation and rule-based alerting. Second-generation: UEBA, correlation engines, and threat intelligence integration. Third-generation (current): cloud-native or lakehouse architectures, AI-assisted triage, agentic investigation, and natural language querying. The third generation is what makes big data security intelligence operationally different from its predecessors: platforms no longer just store and search large datasets; instead, AI systems reason over them in real time. The AI security tools landscape now spans all three generations depending on platform and deployment.

Big Data Security Intelligence in Practice: Platforms and Use Cases

Databricks Lakewatch and the Open Lakehouse SIEM Architecture

In 2026, Databricks entered the security market with Lakewatch, an open agentic SIEM built on the Databricks Data Intelligence Platform. The announcement is significant because it represents a different architectural approach to big data security intelligence: instead of building a separate SIEM data store, Lakewatch brings security analytics to where enterprise data already lives — in the data lakehouse. Organizations that already use Databricks for their data engineering and ML workloads can run security analytics on the same platform, eliminating the data movement and storage duplication that traditional SIEM architectures require.

The “agentic” component means Lakewatch uses AI agents to investigate alerts, correlate signals across data sources, and generate investigation summaries — reducing the manual investigation workload on security analysts. This approach is part of a broader pattern: CrowdStrike’s Charlotte AI, IBM’s ATOM, Splunk’s AI-assisted triage, and now Databricks’ Lakewatch all apply AI agent capabilities to the investigation step that has historically been the analyst bottleneck. The question for enterprises is not whether to use AI-assisted security analytics but which architecture fits their existing data infrastructure.

Key Use Cases: Threat Hunting, Fraud Detection, and Insider Threats

Big data security intelligence platforms support three primary use cases beyond reactive alerting. Threat hunting uses the full historical dataset (weeks or months of behavioral baselines) to proactively search for threat actor patterns that existing rules haven’t surfaced. When the CrowdStrike 2026 report documented VOLT TYPHOON pre-positioning inside US critical infrastructure, threat hunters at targeted organizations could retroactively search for the specific TTPs (tactics, techniques, and procedures) that VOLT TYPHOON uses, even if no alerts had fired. That kind of investigation requires big data infrastructure that can query months of events across all data sources at analytical speed.
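A retroactive hunt is, at its simplest, a filter over retained events. The sketch below is a toy version under stated assumptions: the event fields, hostnames, and command fragments are hypothetical illustrations rather than a vetted detection set, and a real hunt would run as a query against the platform's data store instead of an in-memory list.

```python
from datetime import datetime, timedelta

# Illustrative command fragments only; a real hunt would take its patterns
# from a vetted threat intelligence report.
SUSPICIOUS_FRAGMENTS = ("ntdsutil", "netsh interface portproxy")


def hunt(events, now, lookback_days, fragments=SUSPICIOUS_FRAGMENTS):
    """Return retained events inside the lookback window that match a fragment."""
    cutoff = now - timedelta(days=lookback_days)
    return [
        e for e in events
        if e["time"] >= cutoff
        and any(f in e["command"].lower() for f in fragments)
    ]


now = datetime(2026, 6, 1)
events = [
    {"time": datetime(2026, 1, 15), "host": "dc-01",
     "command": 'ntdsutil "ac i ntds" ifm'},
    {"time": datetime(2026, 5, 20), "host": "ws-44",
     "command": "ping 10.0.0.1"},
    {"time": datetime(2025, 3, 2), "host": "dc-02",
     "command": "NTDSUTIL snapshot"},
]

hits_30 = hunt(events, now, 30)    # a short window finds nothing
hits_180 = hunt(events, now, 180)  # a longer window reaches the January event
```

The same pattern set returns no results over 30 days and one result over 180, which is the practical reason hunting depends on how far back the data goes.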

Fraud detection in financial and e-commerce environments uses the same UEBA infrastructure to detect account takeover, synthetic identity fraud, and payment anomalies. The behavioral baseline built for security purposes (detecting account compromise) doubles as a fraud signal when a user’s spending pattern or transaction type deviates sharply from history. Insider threat detection is the third major use case: correlating HR events (performance issues, termination notices), access pattern changes, data transfer volume increases, and after-hours activity to identify employees who may be preparing to exfiltrate data — a threat that signature-based tools cannot detect at all.
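The insider threat correlation described here can be sketched as a weighted sum over independent signals. The signal names mirror the ones listed above, but the weights and the review threshold are invented for illustration; a real program would calibrate them and would more likely use a learned model than fixed weights.

```python
# Hypothetical weights: how much each signal contributes to the risk score.
SIGNAL_WEIGHTS = {
    "hr_event": 3.0,
    "access_pattern_change": 2.0,
    "transfer_volume_increase": 2.5,
    "after_hours_activity": 1.0,
}


def insider_risk(signals):
    """Sum the weights of the distinct known signals seen for one employee."""
    return sum(SIGNAL_WEIGHTS[s] for s in set(signals) if s in SIGNAL_WEIGHTS)


def needs_review(signals, threshold=5.0):
    """Flag an employee for analyst review when correlated risk is high enough."""
    return insider_risk(signals) >= threshold


# One weak signal on its own stays below the threshold.
late_worker = ["after_hours_activity"]

# Several signals together cross it.
possible_exfiltration = [
    "hr_event",
    "transfer_volume_increase",
    "after_hours_activity",
]

late_worker_flagged = needs_review(late_worker)
exfiltration_flagged = needs_review(possible_exfiltration)
```

Working late is not suspicious by itself in this sketch; the flag comes only from several signals coinciding, which is the point of correlating across data sources.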

Building a Big Data Security Intelligence Program

Effective programs share a few architectural decisions that determine whether the data volume investment produces security value. First, data completeness: a UEBA model is only as accurate as the data it trains on. Gaps in log collection — uncollected endpoint data, cloud services with no API integration, OT/IoT networks with no visibility — create blind spots that attackers specifically target. Second, retention policy: meaningful threat hunting requires 90–365 days of log retention. Many organizations compress or delete logs after 30 days for cost reasons, eliminating the historical context that makes retrospective hunting possible. Third, detection coverage: organizations should regularly test whether their analytics rules and ML models would detect the top adversary TTPs documented in annual threat intelligence reports.
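Two of these decisions reduce to simple checks. The sketch below first multiplies daily ingest by retention days to show the raw storage a retention policy implies (before any compression), then compares a list of required techniques against what existing detections cover. The technique IDs follow MITRE ATT&CK numbering, which is an assumption on our part since the text does not name a framework, and the detection names are hypothetical.

```python
def retained_terabytes(daily_tb, retention_days):
    """Raw volume held at steady state, ignoring compression and tiering."""
    return daily_tb * retention_days


def coverage_gaps(required_techniques, detections):
    """Return required techniques that no detection claims to cover."""
    covered = set().union(*detections.values())
    return sorted(set(required_techniques) - covered)


# Retention: 10 TB/day at the low and high ends of the 90-365 day range.
storage_90 = retained_terabytes(10, 90)
storage_365 = retained_terabytes(10, 365)

# Coverage: techniques taken from a threat report vs. hypothetical detections.
required = ["T1078", "T1059", "T1003", "T1021"]
detections = {
    "ueba_login_anomaly": {"T1078"},
    "script_interpreter_rule": {"T1059"},
    "lateral_movement_model": {"T1021"},
}

gaps = coverage_gaps(required, detections)
```

At 10 TB per day the range works out to 900 TB at 90 days and 3,650 TB at 365, which explains the cost pressure behind 30-day deletion. The coverage check only compares labels, so a technique dropping out of the gap list means a detection claims it, not that the detection has been tested against real adversary behavior.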

The practical gap for most organizations is not the platform — all major SIEM and analytics platforms can process the data volume. It is the coverage and integration work required to get comprehensive, clean data into the platform in the first place. The enterprise threat intelligence layer that provides adversary context to the analytics platform is the second critical ingredient. Big data without intelligence about what to look for produces accurate baselines and still misses targeted attacks.

Frequently Asked Questions

What is big data security intelligence?

Big data security intelligence is the application of machine learning, behavioral analytics, and AI-assisted investigation to the large volumes of security event data that enterprise environments generate. It encompasses SIEM platforms, UEBA models, security data lakes, and agentic investigation systems that process terabytes of log data to detect threats faster and more accurately than rule-based approaches.

How much security log data do enterprises generate?

Large enterprises with 10,000+ employees now ingest over 10 terabytes of log data daily from endpoints, cloud services, SaaS applications, and network devices. Microsoft reported a 150% year-over-year surge in events processed by its Sentinel SIEM in 2025, driven primarily by the expansion of connected systems and services generating logs.

What is UEBA and how does it use big data?

User and Entity Behavior Analytics (UEBA) uses machine learning models to build statistical baselines of normal behavior for users, devices, and services — login times, access patterns, data transfer volumes, and communication patterns. When activity deviates from these baselines, UEBA generates anomaly scores rather than binary alerts, enabling detection of credential-based and malware-free intrusions that traditional signature detection misses.

What is Databricks Lakewatch?

Lakewatch is an open agentic SIEM launched by Databricks in 2026, built on the Databricks Data Intelligence Platform. It brings security analytics to where enterprise data already lives in the data lakehouse, eliminating the data duplication of traditional SIEM architectures. AI agents in Lakewatch investigate alerts, correlate signals, and generate investigation summaries to reduce analyst workload.

How large is the SIEM and security analytics market?

The SIEM market was valued at $12.06 billion in 2026 and is projected to reach $20.78 billion by 2031 at an 11.5% CAGR. The broader security analytics market was valued at $6.72 billion in 2025 and is projected to reach $15.73 billion by 2034. Overall cybersecurity spending is projected to surpass $520 billion annually by 2026.